The 48-Hour Integration Test

A new outsourcing team signs its contract on a Monday afternoon. By Wednesday morning, the team has spent two days waiting for system credentials. Their questions sit unanswered in a Slack channel. The buyer's IT department, which processes internal onboarding requests the same day, has placed the outsourced team in a queue behind three other tickets. Nobody is deliberately obstructing anything. The bureaucracy is doing what bureaucracies do: triaging by proximity, and the team that sits in another building (or another country) loses.

That two-day wait is a signal, and it is a remarkably consistent one. After onboarding enough clients to see the pattern repeat, we have codified it into a framework we call the 48-Hour Integration Test. It tracks four observable signals in the first two working days of an outsourcing engagement. Each signal maps to a specific failure mode that tends to surface three to six months later if left unaddressed.

A caveat before we lay out the framework: we are describing correlations, not iron laws. A slow start may be a symptom of deeper organisational dysfunction rather than its cause. An engagement can survive a poor score on one or two signals if both parties catch and correct the problem early. Across our engagements, these four signals have been the most reliable early indicators we have found, and ignoring them has consistently led to the same outcome: a partnership that looked promising on paper but never gained traction in practice.

The four signals

Signal 1: System access speed.

How quickly does the outsourced team receive credentials, VPN access, and tool logins after contract signing? A team that is fully provisioned within hours starts producing work on day one. A team that waits two or three days starts with idle time, and idle time sets a precedent. The buyer's IT department learns, without anyone saying so explicitly, that the outsourced team is a lower priority than internal staff. That precedent compounds. Six months later, every software change, every new tool rollout, every permission update reaches the outsourced team last. Productivity drags, and the buyer blames the vendor for underperformance that originated in its own provisioning process.

The benchmark varies by organisation. A 30-person startup with cloud-based tools can reasonably provision access within four hours. A Fortune 500 company with SOC 2 compliance requirements, multi-factor authentication protocols, and a formal security review process may need 24 hours, and that is fine. The signal is relative speed: how quickly does the buyer's IT team process external provisioning compared to internal requests? If the outsourced team's access request sits in a queue behind lower-priority internal tickets, that tells you something about where the engagement sits in the organisation's real priorities, regardless of what the contract says.
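One way to make "relative speed" concrete is to compare the outsourced team's turnaround with the buyer's typical internal turnaround. The sketch below is only an illustration: the function name, the choice of the median as the baseline, and the sample figures are our assumptions, not part of the framework.

```python
from statistics import median


def relative_provisioning_ratio(external_hours: float, internal_hours: list[float]) -> float:
    """Return the external turnaround divided by the median internal turnaround.

    A value near 1.0 means the outsourced team was treated like internal staff;
    a value well above 1.0 means its request waited longer than usual.
    """
    if not internal_hours:
        raise ValueError("need at least one internal request to compare against")
    baseline = median(internal_hours)
    if baseline <= 0:
        raise ValueError("internal turnaround must be positive")
    return external_hours / baseline


# Hypothetical figures: three recent internal requests took 3, 4 and 6 hours;
# the outsourced team's request took 40 hours.
ratio = relative_provisioning_ratio(40, [3, 4, 6])
print(f"External request took {ratio:.1f}x the median internal turnaround")
```

A ratio is used rather than an absolute threshold so that a 24-hour enterprise process and a four-hour startup process can be judged on the same footing.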

Signal 2: Internal response time.

When the outsourced team asks its first substantive question, how long before someone on the buyer's side provides a substantive answer? The outsourced team will ask questions in its first week. It is learning the client's specific processes, chart of accounts, exception-handling preferences, and reporting formats. A same-day response tells the team that its questions are taken seriously. A 24- to 48-hour silence tells it the opposite. The downstream effect is predictable: the team stops asking and starts guessing, which is how errors compound and exceptions go unreported.

A "substantive answer" is different from an acknowledgement. A reply that says "we're looking into it" does not stop the clock. The clock stops only when a response gives the team enough information to act: a clarification of the chart of accounts, a confirmed exception-handling rule, a forwarded document. Four hours is a reasonable target for most organisations. Anything beyond a full working day is a warning sign.
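The rule that an acknowledgement does not count as an answer can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `Reply` record and its `substantive` flag are hypothetical (someone on the buyer's side would have to tag replies by hand), and the function measures wall-clock time rather than working hours.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Reply:
    sent_at: datetime
    substantive: bool  # True only if the reply gives the team enough to act on


def time_to_substantive_answer(asked_at: datetime, replies: list[Reply]) -> Optional[timedelta]:
    """Elapsed time from the question to the first substantive reply.

    Acknowledgements are skipped. Returns None if the question is still open.
    """
    for reply in sorted(replies, key=lambda r: r.sent_at):
        if reply.substantive and reply.sent_at >= asked_at:
            return reply.sent_at - asked_at
    return None


# Hypothetical timeline for one question asked at 09:00.
asked = datetime(2024, 1, 8, 9, 0)
replies = [
    Reply(datetime(2024, 1, 8, 9, 30), substantive=False),  # "we're looking into it"
    Reply(datetime(2024, 1, 8, 15, 0), substantive=True),   # confirmed exception rule
]
elapsed = time_to_substantive_answer(asked, replies)
print(elapsed)  # 6:00:00
```

In this example the 09:30 acknowledgement is ignored and the recorded response time is six hours, which would miss a four-hour target but still fall within the working day.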

Signal 3: First deliverable accuracy.

Does the first piece of work the outsourced team produces meet the brief? Whether the deliverable is a batch of processed invoices, a reconciliation report, or a set of categorised transactions, it reveals whether expectations are aligned. A clean deliverable gives the engagement a foundation. A miss, though, is diagnostic rather than damning. The type of miss tells you where the problem sits:

A miss caused by a briefing gap (the client's instructions were incomplete or ambiguous) predicts ongoing rework driven by unclear expectations. The fix is to rewrite the brief.

A miss caused by a process misunderstanding (the team followed the instructions but misread the client's chart of accounts or exception rules) predicts a training gap. The fix is a structured walkthrough, not a general reprimand.

A miss caused by a skills mismatch (the team lacked the technical ability to execute the task) predicts a staffing problem. The fix is to replace or supplement the team member, ideally before the pattern becomes entrenched.

Each type is cheapest to fix in the first week.
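For teams that log misses in a tracker, the three categories can be captured as a small lookup so that every recorded miss carries its predicted problem and suggested fix. The names below (`MissType`, `MISS_GUIDE`, `describe_miss`) are hypothetical; the wording of each entry is paraphrased from the three cases above.

```python
from enum import Enum


class MissType(Enum):
    BRIEFING_GAP = "briefing gap"
    PROCESS_MISUNDERSTANDING = "process misunderstanding"
    SKILLS_MISMATCH = "skills mismatch"


# Each entry: (what the miss predicts, the suggested fix).
MISS_GUIDE = {
    MissType.BRIEFING_GAP: (
        "ongoing rework driven by unclear expectations",
        "rewrite the brief",
    ),
    MissType.PROCESS_MISUNDERSTANDING: (
        "a training gap",
        "run a structured walkthrough",
    ),
    MissType.SKILLS_MISMATCH: (
        "a staffing problem",
        "replace or supplement the team member",
    ),
}


def describe_miss(miss: MissType) -> str:
    """Return a one-line summary of what a miss predicts and how to fix it."""
    predicts, fix = MISS_GUIDE[miss]
    return f"{miss.value}: predicts {predicts}; fix: {fix}"


print(describe_miss(MissType.BRIEFING_GAP))
```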

Signal 4: Communication protocol.

Has the working relationship established a clear, documented communication plan with agreed channels and response windows? This signal is less about which channels the team uses (Slack, email, Teams) and more about whether a deliberate structure exists. Async-only communication in the first 48 hours often predicts a relationship where trust develops slowly, because the outsourced team cannot ask the fast clarifying questions that prevent errors and the buyer's team cannot course-correct in real time. By month three, both sides are managing each other through formal channels rather than collaborating.

The distinction that separates a warning from a valid operating model is intent. Async-by-neglect (no communication plan exists, nobody has discussed response windows, messages go unanswered because nobody owns them) is a red flag. Async-by-design (the teams have agreed on channels, response windows, escalation paths, and a regular sync cadence, even if most daily communication is asynchronous) is a legitimate and often necessary structure, particularly for teams working across distant time zones. The test should look for a documented communication plan, not penalise a specific channel choice.

Vendor preparedness

The four signals above place the majority of accountability on the buyer, and rightly so: the buyer controls the onboarding environment. But framing integration as a one-sided test would be dishonest. The vendor has a proactive role that the framework should acknowledge.

Before day one, the vendor should have provided a clear prerequisites list: what systems it needs access to, what credentials are required, what documentation the team will need to begin work, and who the primary point of contact should be. A vendor that arrives on Monday expecting the buyer to have guessed what it needs has contributed to the delay it is about to measure. Similarly, when access or answers are slow, the vendor should follow up. A vendor that logs the delay but does not escalate it is collecting evidence, not solving problems.

We include vendor preparedness in our own scoring, but as a contextual factor rather than a fifth signal. The reason is practical: the four signals are observable by the buyer on days one and two, with no vendor cooperation required. Vendor preparedness requires the buyer to evaluate something that happened (or failed to happen) before the engagement began, which makes it harder to score in real time. It belongs in the day-three review conversation, where both sides can assess each other's contribution honestly.

Scoring the test

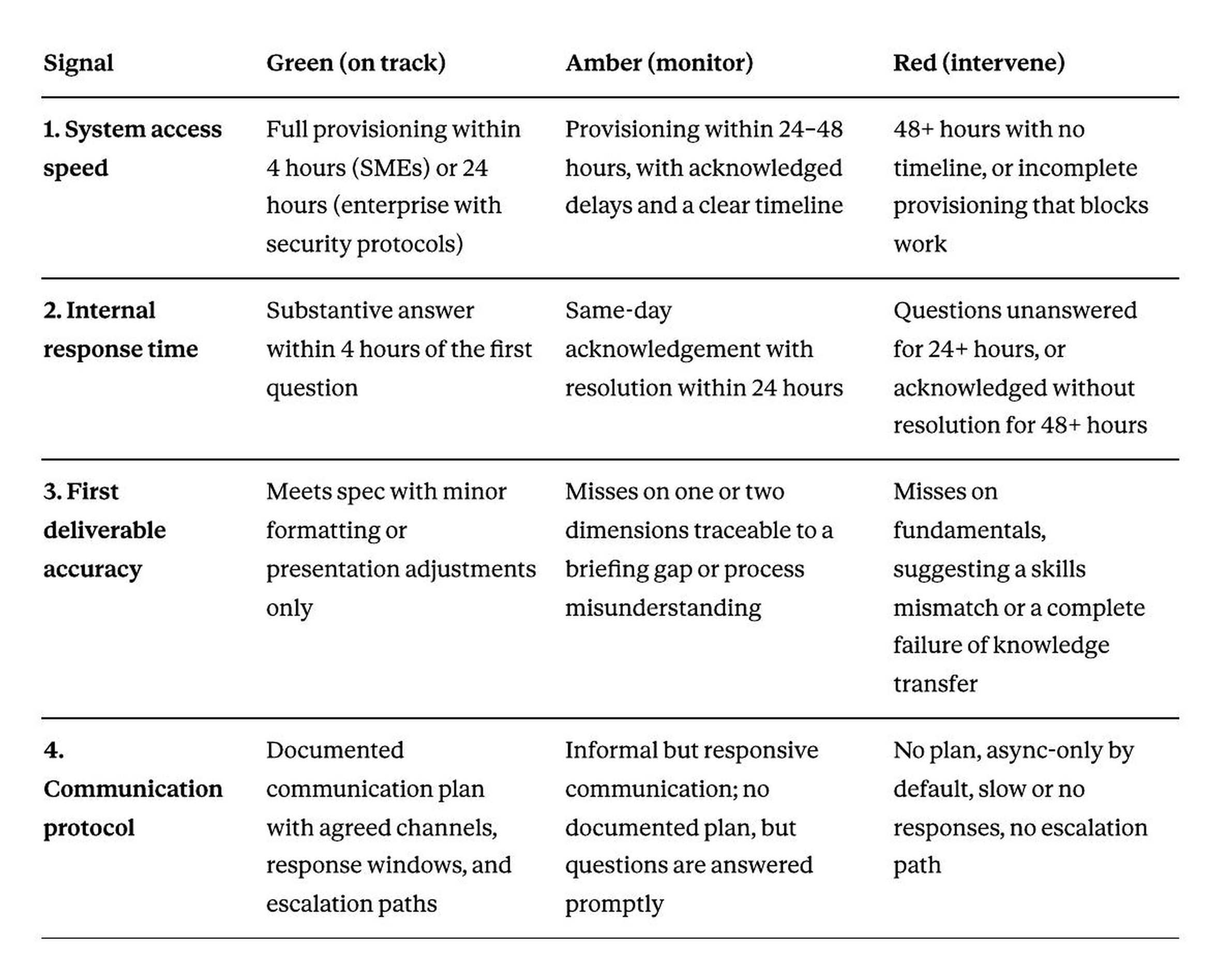

The matrix below provides a structured way to evaluate each signal. The thresholds are guidelines, not absolutes; adjust for your organisation's size, security requirements, and operating model.

Reading the scores:

Four greens in the first 48 hours do not guarantee a successful engagement, but they indicate that the structural foundations are in place. The engagement has earned the right to move forward without intervention.

Two or more ambers suggest that the onboarding process needs attention before small frictions become entrenched habits. The day-three review should focus on converting ambers to greens within the first two weeks.

Any red requires immediate intervention. A red on signal 3 caused by a skills mismatch is the most urgent, because it may indicate a staffing problem that cannot be solved with better communication or faster provisioning. A red on signals 1 or 2 typically reflects an internal process problem on the buyer's side, which is fixable but requires someone with authority to reprioritise the outsourced team's access requests and questions.
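The reading rules above can be written as a short decision function. This is a sketch, not our scoring tool: the function name and the returned labels are hypothetical, and the handling of a single amber is our assumption, since the rules above only address four greens, two or more ambers, and any red.

```python
from collections import Counter

VALID_RATINGS = {"green", "amber", "red"}


def read_scores(ratings: dict[str, str]) -> str:
    """Map per-signal ratings to a recommended next step."""
    counts = Counter(ratings.values())
    unknown = set(counts) - VALID_RATINGS
    if unknown:
        raise ValueError(f"unknown ratings: {sorted(unknown)}")
    if counts["red"] >= 1:
        return "intervene immediately"
    if counts["amber"] >= 2:
        return "fix onboarding: convert ambers to greens within two weeks"
    if counts["amber"] == 1:
        # ASSUMPTION: the text does not cover a single amber; we treat it
        # as something to watch and raise in the day-three review.
        return "monitor and raise at the day-three review"
    return "proceed without intervention"


# Hypothetical scorecard after the first 48 hours.
scores = {
    "system access speed": "amber",
    "internal response time": "amber",
    "first deliverable accuracy": "green",
    "communication protocol": "green",
}
print(read_scores(scores))
```

Checking for red first reflects the rule that a single red outranks any number of greens or ambers.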

How to run the test

The test requires no additional budget, tooling, or external facilitation. One person on the buyer's side (typically the project owner or operations lead) can run it alongside normal onboarding activities.

Day one. Note the time the contract is signed and the time the outsourced team receives full system access. Record the first substantive question the team asks, and note the elapsed time before it receives a substantive answer. Note which channel the answer came through (email, Slack, call, ticket) and whether a communication plan has been agreed.

Day two. Review the first deliverable against the original brief. Where it met spec, note it. Where it diverged, classify the miss: briefing gap, process misunderstanding, or skills mismatch. Check whether the vendor provided a prerequisites list before day one, and whether it followed up on any outstanding access or unanswered questions.

Day three. Share the scored matrix with both teams. The conversation that follows is the most valuable part of the test. It will surface assumptions that neither side had articulated, process gaps that would have compounded over weeks, and, in most cases, problems that are fixable right now at a fraction of what they would cost in month six.

What the test reveals about the buyer

The pattern we have observed consistently across our engagements is that the buyer's own behaviour dominates the scorecard. Three of the four signals are controlled by the buyer. The vendor controls deliverable quality. The buyer controls the environment in which that quality is produced.

This is not an argument that vendors bear no responsibility for failed engagements. A vendor that delivers sloppy work, arrives unprepared, or fails to follow up on outstanding access will fail regardless of how quickly the buyer provisions credentials. But rigorous vendor selection followed by careless onboarding produces the same outcome as no selection at all. The investment in evaluation, paid pilots, and well-run RFPs is wasted if the buyer's internal team does not follow through.

A sceptical reader might point out that this framework simply describes good onboarding practice for any new team, internal or external. In part, it does. But outsourced teams face a structural disadvantage that internal hires do not: they sit outside the organisation's default communication flows, IT provisioning queues, and informal trust networks. The signals that would be mildly inconvenient for an internal hire (a day's delay in system access, a slow response to a first-week question) are compounding problems for a team that has no corridor conversations, no adjacent desks, and no pre-existing relationships to fall back on. The 48-Hour Integration Test makes those compounding problems visible before they become expensive.

We run this test as part of every new engagement. To book a free Back-Office Audit, visit ledgeris.com/contact.
